What's new

Three notable improvements over the previous generation:

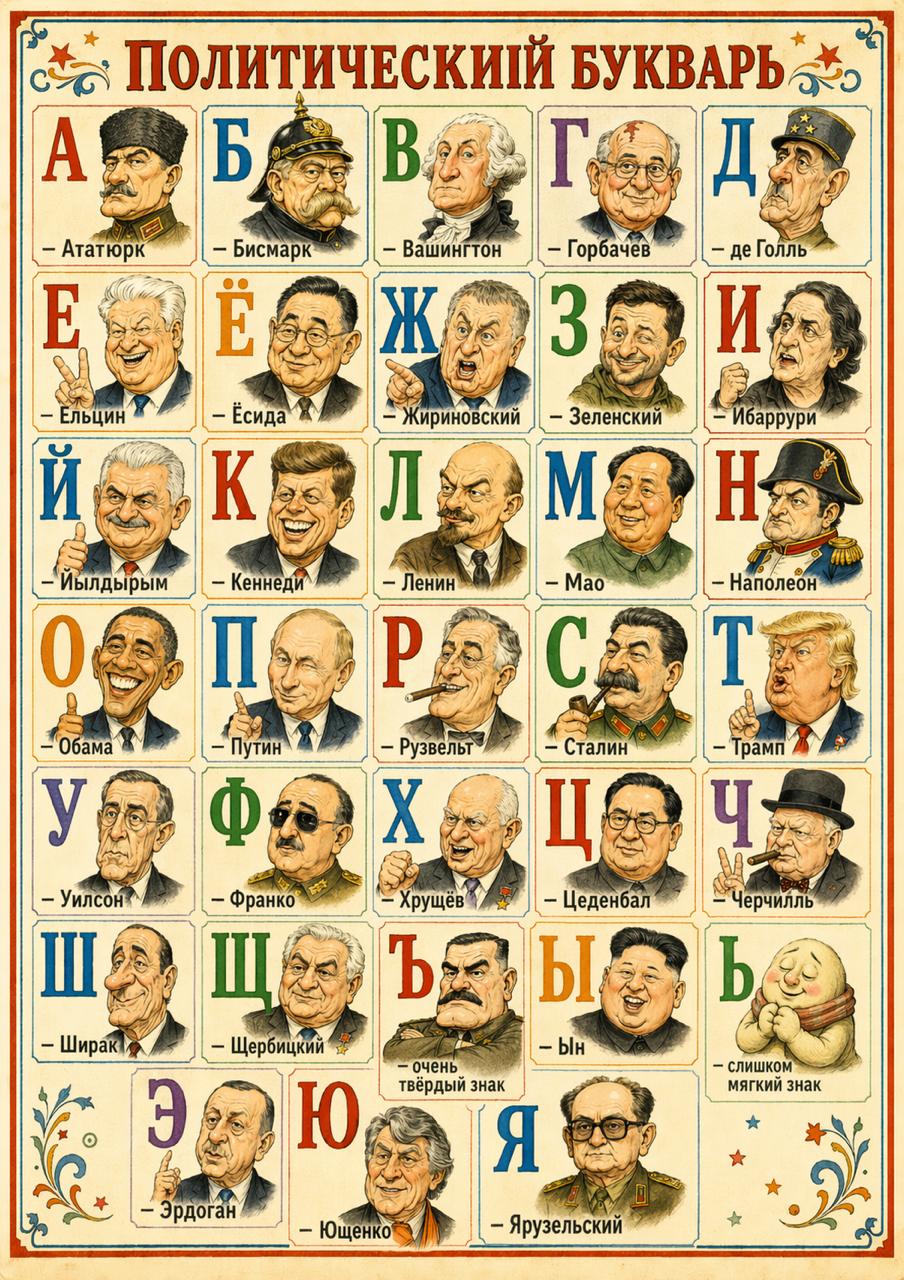

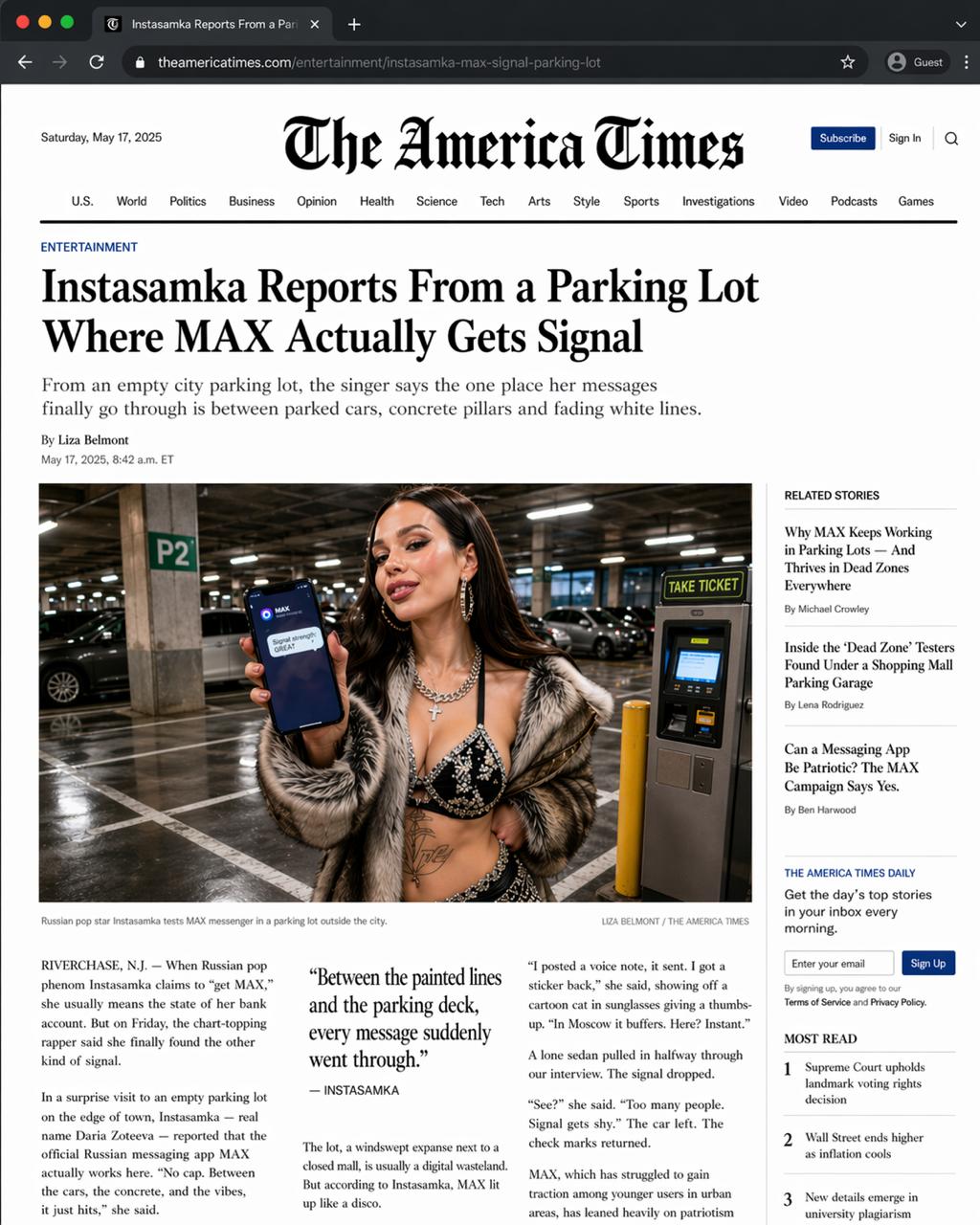

- **Text in images**: legible, clean text with no garbled gibberish. Text rendering has been a chronic weakness of image-generation models; this is a noticeable step forward.

- **UI generation**: describe an interface in plain language and get a working mockup. The recommendation feed on the right came out cleanly.

- **Live web data**: the model pulls current information during generation, not just what's in its training data.

There's also an extended thinking mode: give it a short prompt and the model comes up with a concept on its own and delivers a finished image, with no detailed instructions needed.

Non-standard formats

OpenAI also showed how the model handles arbitrary layouts: ad banners, multi-column spreads, and full newspaper pages with headlines and body copy.

Why it matters

The lack of accurate text in images is what kept designers from using generative models for real work: logos, banners, UI mockups. Now it works. All in all, a genuinely solid release from Altman and team, nothing to be embarrassed about.

We're expecting GPT-5.5 or a new model called Spud within the next couple of weeks.

OpenAI: try it via "try in ChatGPT". It works in the browser version, but not in the app yet.

TechCrunch